When & How To Run An A/B Test On Your Website Using Google Optimize: Data Driven Daily Tip #210

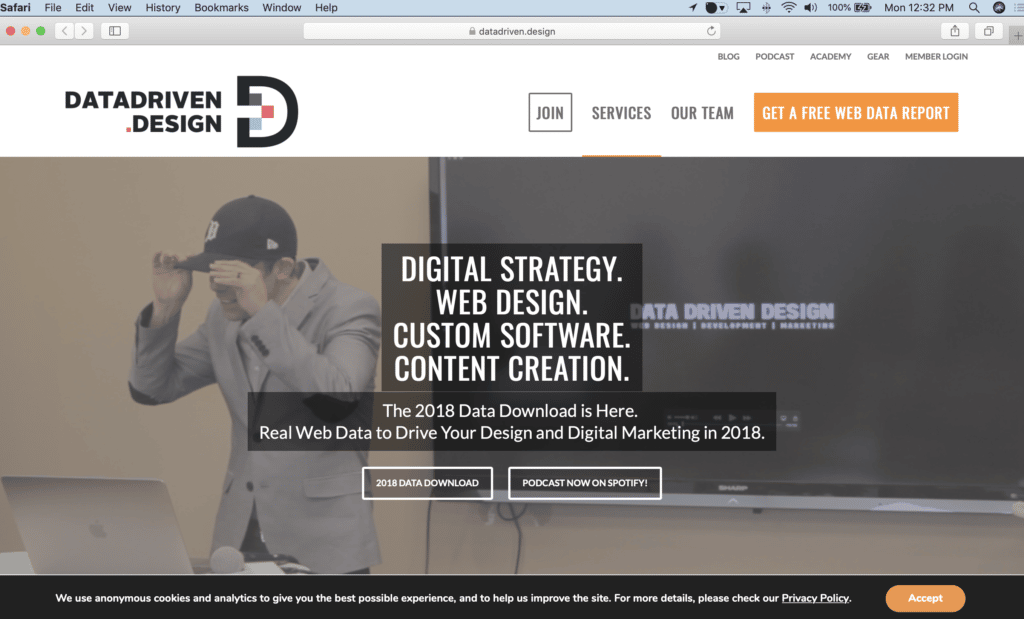

We're redesigning our logo. We can't decide which logo to pick between the final two options. This is obviously a very important decision for our company and we've gathered feedback and input internally and gone through a couple rounds of strategic revisions to get to this point. Sound familiar?

Well, instead of relying only on our opinions, since we believe in Data Over Opinions, we're going to gather a couple of key data points. One is going to be a Facebook Poll, and we may run an ad or two to get some additional feedback.

But the really interesting data point will be how people actually interact with our website based on which logo is shown.

This means we're going to run an A/B test on our website using Google Optimize.

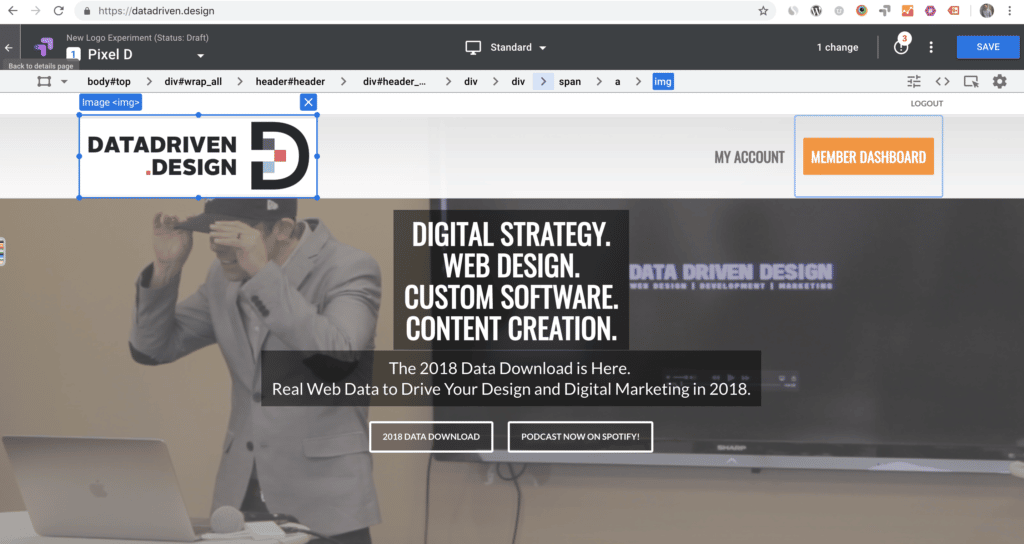

Optimize will serve our current (Original) logo to one-third of the web users who hit DataDriven.Design, serve new logo option 1, which we're calling "Pixel D," to another third, and serve new logo option 2, which we're calling "Smooth DD," to the remaining third.

This will be done randomly, and this video shows the step-by-step process of how to use Google Optimize to set up an experiment.

It walks you through how to not only create variants, but also how to set up objectives so that you get key data to make your decision.
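To make the three-way split concrete, here's a minimal Python sketch of one common way to bucket visitors evenly. This is not Optimize's actual implementation (Optimize handles the split for you). The `assign_variant` function, the `logo-test` experiment name and the `visitor-N` IDs are all made up for illustration.

```python
import hashlib
from collections import Counter

# The three logo variants from this experiment.
VARIANTS = ["Original", "Pixel D", "Smooth DD"]


def assign_variant(visitor_id: str, experiment: str = "logo-test") -> str:
    """Map a visitor to one variant, evenly and repeatably.

    Hypothetical helper: hashing the visitor ID means the same visitor
    always sees the same logo, while the overall split stays close to
    one-third per variant.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(VARIANTS)
    return VARIANTS[bucket]


def simulate(n_visitors: int = 9000) -> Counter:
    """Count how many made-up visitors land in each variant."""
    return Counter(assign_variant(f"visitor-{i}") for i in range(n_visitors))


if __name__ == "__main__":
    for variant, count in simulate().items():
        print(variant, count)
```

Running the simulation should show each logo getting roughly a third of the visitors, which is the behavior we want from the experiment.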

A/B Testing like this should be done to a certain extent on every website, testing colors, imagery, calls to action and more.

We always recommend running only one A/B test at a time per page, and running the test for at least a week, and up to a month depending on how much web traffic your site gets, before looking at the data to declare a winner.
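If you want a rough feel for why traffic drives the test length, here's a back-of-the-envelope Python sketch using a standard sample-size formula for comparing two conversion rates. Every number in it (the 5% baseline rate, the 6% target rate, 500 visitors a day) is a made-up assumption, not data from our site, and the sketch ignores the extra caution you'd want when comparing three variants at once.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_rate: float, expected_rate: float,
                         alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed per variant to detect the difference
    between two conversion rates (classic two-proportion formula)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


def days_needed(per_variant: int, n_variants: int, daily_visitors: int) -> int:
    """Days of traffic needed if visitors are split evenly across variants."""
    return math.ceil(per_variant * n_variants / daily_visitors)


if __name__ == "__main__":
    # Hypothetical inputs: 5% baseline, hoping to detect a lift to 6%.
    needed = visitors_per_variant(0.05, 0.06)
    print("Visitors per variant:", needed)
    print("Days at 500 visitors/day:", days_needed(needed, 3, 500))
```

The takeaway matches the rule of thumb above: the less traffic a page gets, or the smaller the difference you're trying to detect, the longer the test has to run.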

Objectives on your experiment are extremely important. They show you which variation of your experiment "won" and why.

For example, you can set objectives based on eCommerce transactions, Google Analytics goals, or pre-set objectives within Optimize, like which variation keeps users on the page the longest, which produces the fewest bounces, and more.
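To show what "winning" on an objective can look like in numbers, here's a small Python sketch that compares two variants on a conversion-style objective using a classical two-proportion z-test. This is a swapped-in illustration, not the method Optimize uses for its own reports, and the visitor and conversion counts are invented for the example.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Compare conversion rates of variant A and variant B.

    Returns (z, p_value). A small p-value suggests the difference is
    unlikely to be random noise alone.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-sided test
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: Original vs. one new logo, 1,000 visitors each.
    z, p = two_proportion_z_test(conv_a=50, n_a=1000, conv_b=80, n_b=1000)
    print(f"z = {z:.2f}, p-value = {p:.4f}")
```

Whatever tool does the math, the idea is the same: pick the objective first, then let the data on that objective decide the winner.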

We will definitely have more content on how to read your experiment result reporting and make data driven decisions based on those reports, but for now, you're off and running with Google Optimize installed on your website.

For how to actually set up Google Optimize on your website, please see Data Driven Daily Tip #209.

Thanks for reading, watching and listening, and have a great day!

KEEP MARKETING!

Paul Hickey, Founder / CEO / Lead Strategist at Data Driven Design, LLC has created and grown businesses via digital strategy and internet marketing for more than 10 years. His sweet spot is using analytics to design and build websites and grow the audience and revenue of businesses via SEO/Blogging, Google Adwords, Bing Ads, Facebook and Instagram Ads, Social Media Content Marketing and Email Marketing. The part that he’s most passionate about is quantifying next marketing actions based on real data.